I use chatgpt as a suggestion. Like an aid to whatever it is that I’m doing. It either helps me or it doesn’t, but I always have my critical thinking hat on.

If the standard is replicating human level intelligence and behavior, making up shit just to get you to go away about 40% of the time kind of checks out. In fact, I bet it hallucinates less and is wrong less often than most people you work with

My kid sometimes makes up shit and completely presents it as facts. It made me realize how many made up facts I learned from other kids.

And it just keeps improving over time. People shit all over ai to make themselves feel better because scary shit is happening.

I did a Google search to find out how much I pay for water. The water department where I live bills by the MCF (1,000 cubic feet). The AI Overview told me an MCF was one million cubic feet. It’s a unit of measurement. It’s not subjective, not an opinion, and AI still got it wrong.

Shouldn’t it be kcf? Or tcf if you’re desperate to avoid standard prefixes?

Everywhere else in the world a big M means million.

Yeah, shouldn’t that be Kcf, Kilo cubic foot?

Kilo is a small k as there wasn’t a person named that.

I think in this case it’s Roman numeral M

The only thing that would make more sense would be if the bill was in cuneiform.

Americans really using ANYTHING but metric, huh?

Except languages like French (mille)

And Irish – míle.

Yeah, that’s an odd one. My city does water by the gallon, which is much more reasonable.
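For anyone who wants to sanity-check the MCF figure from the water bill comment, here is a minimal sketch of the arithmetic. It assumes the billing sense of MCF (M as Roman numeral 1,000, so 1 MCF = 1,000 cubic feet) and the US liquid gallon of 231 cubic inches. The function names are made up for illustration and are not from any utility's tooling.

```python
from fractions import Fraction

# Assumption: "MCF" in the billing sense, where M is the Roman numeral for 1,000.
CUBIC_FEET_PER_MCF = 1_000

# 1 cubic foot = 1728 cubic inches; 1 US liquid gallon = 231 cubic inches.
GALLONS_PER_CUBIC_FOOT = Fraction(1728, 231)


def mcf_to_cubic_feet(mcf):
    """Convert a meter reading in MCF to cubic feet."""
    return mcf * CUBIC_FEET_PER_MCF


def mcf_to_gallons(mcf):
    """Convert a meter reading in MCF to US liquid gallons."""
    return float(mcf * CUBIC_FEET_PER_MCF * GALLONS_PER_CUBIC_FOOT)


if __name__ == "__main__":
    print(mcf_to_cubic_feet(1))          # cubic feet in one MCF
    print(round(mcf_to_gallons(1), 1))   # gallons in one MCF
```

Under those assumptions one MCF works out to roughly 7,480 gallons, so reading it as a million cubic feet would be off by a factor of 1,000.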

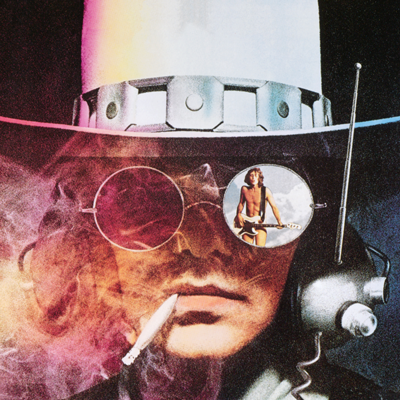

First off, the beauty of these two posts being beside each other is palpable.

Second, as you can see on the picture, it’s more like 60%

No it’s not. If you actually read the study, it’s about AI search engines correctly finding and citing the source of a given quote, not general correctness, and not just the plain model

Read the study? Why would i do that when there’s an infographic right there?

(thank you for the clarification, i actually appreciate it)

If you want an AI to be an expert, you should only feed it data from experts. But these are trained on so much more. So much garbage.

Most of my searches have to do with video games, and I have yet to see any of those AI generated answers be accurate. But I mean, when the source of the AI’s info is coming from a Fandom wiki, it was already wading in shit before it ever generated a response.

I’ve tried it a few times with Dwarf Fortress, and it was always horribly wrong hallucinated instructions on how to do something.

I love that this mirrors the experience of experts on social media like reddit, which was used for training chatgpt…

Also common in news. There’s an old saying along the lines of “everyone trusts the news until they talk about your job.” Basically, the news is focused on getting info out quickly. Every station is rushing to be the first to break a story. So the people writing the teleprompter usually only have a few minutes (at best) to research anything before it goes live in front of the anchor. This means that you’re only ever going to get the most surface level info, even when the talking heads claim to be doing deep dives on a topic. It also means they’re going to be misleading or blatantly wrong a lot of the time, because they’re basically just parroting the top google result regardless of accuracy.

One of my academic areas of expertise way back in the day (late '80s and early '90s) was the so-called “Mitochondrial Eve” and “Out of Africa” hypotheses. The absolute mangling of this shit by journalists even at the time was migraine-inducing and it’s gotten much worse in the decades since then. It hasn’t helped that subsequent generations of scholars have mangled the whole deal even worse. The only advice I can offer people is that if the article (scholastic or popular) contains the word “Neanderthal” anywhere, just toss it.

I’m curious. Are you saying neanderthal didn’t exist, or was just homo sapiens? Or did you mean in the context of mitochondrial Eve?

Scientists confirm it: we are living in a simulation!

Are you saying neanderthal didn’t exist, or was just homo sapiens? Or did you mean in the context of mitochondrial Eve?

All of these things, actually. The measured, physiological differences between “homo sapiens” and “neanderthal” (the air quotes here meaning “so-called”) fossils are much smaller than the differences found among contemporary humans, so the premise that “neanderthals” represent(ed) a separate species - in the sense of a reproductively isolated gene pool since gone extinct - is unsupported by fossil evidence. Of course nobody actually makes that claim anymore, since it’s now commonly reported that contemporary humans possess x% of neanderthal DNA (and thus cannot be said to be “extinct”). Of course nobody originally (when Mitochondrial Eve was first mooted) made any claims whatsoever about neanderthals: the term “neanderthal” was imported into the debate over the age and location of the last common mtDNA ancestor years later, after it was noticed that the age estimates of neanderthal remains happened to roughly match the age estimates of the genetic last common ancestor. And this was also after the term “neanderthal” had previously gone into the same general category in Anthropology as “Piltdown Man”.

Most ironically, articles on the subject today now claim a correspondence between the fossil and genetic evidence, despite the fact that the very first articles (out of Allan Wilson’s lab and published in Nature and Science in the mid-1980s) drew their entire impact and notoriety from the fact that the genetic evidence (which supposedly gave 100,000 years ago and then 200,000 years ago as the age of the last common ancestor) completely contradicted the fossil evidence (which shows upright bipedal hominids spreading out of Africa more than a million and a half years ago). To me, the weirdest thing is that academic articles on the subject now almost never cite these two seminal articles at all, and most authors seem genuinely unaware of them.

There’s an old saying along the lines of “everyone trusts the news until they talk about your job.”

This is something of a selection bias. Generally speaking, if you don’t trust a news broadcast then you won’t watch it. So of course you’re going to be predisposed to trust the news sources you do listen to. Until the news source bumps up against some of your prior info/intuition, at which point you start experiencing skepticism.

This means that you’re only ever going to get the most surface level info, even when the talking heads claim to be doing deep dives on a topic.

Investigative journalism has historically been a big part of the industry. You do get a few punchy “If it bleeds, it leads” hit pieces up front, but the Main Story tends to be the result of some more extensive investigation and coverage. I remember my home town of Houston had Marvin Zindler, a legendary beat reporter who would regularly put out interconnected 10-15 minute segments that offered continuous coverage on local events. This was after a stint at a municipal Consumer Fraud Prevention division that turned up numerous health code violations and sales frauds (he was allegedly let go by an incoming sheriff with ties to the local used car lobby, after Zindler exposed one too many odometer scams).

But investigative journalism costs money. And it’s not “business friendly” from a conservative corporate perspective, which can cut into advertising revenues. So it is often the first line of business to be cut when a local print or broadcast outlet gets bought up and turned over for syndication.

That doesn’t detract from a general popular appetite for investigative journalism. But it does set up an adversarial economic relationship between journals that do carry investigative reports and those more focused on juicing revenues.

it’s much older than reddit https://en.wikipedia.org/wiki/Gell-Mann_amnesia_effect

i was going to post this, too.

The Gell-Mann amnesia effect is a cognitive bias describing the tendency of individuals to critically assess media reports in a domain they are knowledgeable about, yet continue to trust reporting in other areas despite recognizing similar potential inaccuracies.

I just use it to write emails, so I declare the facts to the LLM and tell it to write an email based on that and the context of the email. Works pretty well but doesn’t really sound like something I wrote, it adds too much emotion.

This is what LLMs should be used for. People treat them like search engines and encyclopedias, which they definitely aren’t

That sounds like more work than just writing the email to me

Yeah, that has been my experience so far. LLMs take as much or more work vs the way I normally do things.

40% seems low

that depends on what topic you know and how well you know it.

LLMs are actually pretty good for looking up words by their definition. But that is just about the only topic I can think where they are correct even close to 80% of the time.

yeah. some things I’d be shocked if they were correct 1% of the time. some things, like that, I might expect them to be correct about 80%, yeah.

I’ve been using o3-mini mostly for `ffmpeg` command lines. And a bit of `sed`. And it hasn’t been terrible, it’s a good way to learn stuff I can’t decipher from the man pages. Not sure what else it’s good for tbh, but at least I can test and understand what it’s doing before running the code.

In my experience plain old googling is still better.

I wonder if AI got better or if Google results got worse.

Bit of the first, lots of the second.

True, in many cases I’m still searching around because the explanations from humans aren’t as simplified as the LLM. I’ll often have to be precise in my prompting to get the answers I want which one can’t be if they don’t know what to ask.

Are you me? I’ve been doing the exact same thing this week. How creepy.

we just had to create a new instance for coder7ZybCtRwMc, we’ll merge it back soon

This, but for tech bros.

Deepseek is pretty good tbh. The answers sometimes leave out information in a way that is misleading, but targeted follow up questions can clarify.

Like leaving out what happened in Tiananmen Square in 1989?

You must be more respectful of all cultures and opinions.

The amount of people who don’t realize this is satire reminds me of old Reddit

Is it though? I really can’t tell.

Poe’s law has been working overtime recently.

Edit: saw a comment further down that it is a default deepseek response for censored content, so yeah, a joke. People who don’t have that context aren’t going to get the joke.

It got me, for whatever that’s worth.

Not everybody has heard every joke, buddy.

In my opinion it should have been the politburo that was pureed under tank tracks and hosed down into the sewers instead of those students.

It really is so convenient, there are so many CPC members, but they all happen to be near a conveniently placed wall that is more than enough.

The western narrative about Tiananmen Square is basically orthogonal to the truth?

Like it’s not just filled with fabricated events like tanks pureeing students, it completely misses the context and response to tell a weird “china bad and does evil stuff cuz they hate freedom” story.

The other weird part is that the big setpieces of the western narrative, like tank man getting run over by tanks headed to the square, are so trivial to debunk, just look at the uncropped video, yet I have yet to see 1 lemmiter actually look at the evidence and develop a more nuanced understanding. I’ve even had them show me compilations of photos from the events and never stop to think “Huh, these pictures of gorily lynched cops, protesters shot in streets outside the square, and burned vehicles aren’t consistent with what I’ve been told, maybe I’ve been misled?”

I just read the entire article you linked and it seems pretty in line with what I was taught about what happened in school. And it definitely doesn’t make me sympathetic to the PLA or the government.

Then your school did a better job of educating you than anyone talking about thousands of protesters getting ground into paste. Mine told me that tens of thousands of protesters were all blocked into the square, then tanks machinegunned them all down and ran them over, and the only picture to make it out of the event was Tank Man blocking the tanks from entering the square.

The point isn’t to make you sympathetic to the PLA, if you have a more nuanced understanding than “china killed 1000s of protestors because they fear and hate freedom”, you’re already ahead of 9/10 lemmitors, including the one I was responding to.

You can’t have a constructive discussion with someone whose analysis begins and ends with “china bad”, because they are incapable of actually engaging with the material beyond twisting any data into hostile evidence, and making up some if none is available.

classic lemmy ml

Is this a reference I’m not getting? Otherwise, I feel like censorship of a massacre is not morally acceptable regardless of culture. I’ll leave this here so this doesn’t get mistaken for nationalism:

https://en.m.wikipedia.org/wiki/List_of_massacres_in_the_United_States

It’s by no means a comprehensive list, but more of a primer. We do not forget these kinds of things in the hope that we may prevent future occurrences.

It’s a fucking joke FFS. It’s the standard response from Deepseek.

Oh, gotcha. Yeah, I’m not on board with that. Thanks for clarifying. I thought you were being sincere for a moment. This is good satire. Carry on, please.

Thank you, that provides context that was missing for the joke to land.

How dare they ask!

https://en.m.wikipedia.org/wiki/List_of_massacres_in_the_United_States

Huh, I used to make a joke about how there’s never been a “Bloody Monday” in history. I learn something new every day …

Ah dun wanna 😠

Are we calling the communist party of China and their history of genocide and general evil, some kind of culture now?

Can’t believe how hostile people are against nazis, we should have respected their cultural use of gas chambers.

Communism was never the problem, authoritarianism is the problem

The cpc is and has always been the definition of authoritarianism, and now it’s hypercapitalist authoritarianism.

You can get an uncensored local version running if you got the hardware at least

It censors 1989 China. If you ask it to not say the year, it will work

I think that AI has now reached the point where it can deceive people, not equal to humanity.

Oof let’s see, what am I an expert in? Probably system design - I work at (insert big tech) and run a system design club there every Friday. I use ChatGPT to bounce ideas and find holes in my design planning before each session.

Does it make mistakes? Not really? It has a hard time getting creative with nuanced examples (i.e. if you ask it to “give practical examples where the time/accuracy tradeoff in Flink is important” it can’t come up with more than 1 or 2 truly distinct examples) but it’s never wrong.

The only times it’s blatantly wrong is when it hallucinates due to lack of context (or oversaturated context). But you can kind of tell something doesn’t make sense and prod followups.

Tl;dr funny meme, would be funnier if true

That’s not been my experience with it. I’m a software engineer and when I ask it stuff it usually gives plausible answers but there is always something wrong. For example it will recommend old outdated libraries or patterns that look like they would work, but when you try them out you figure out they are set up differently now or didn’t even exist.

I have been using windsurf to code recently and I’m liking that but it makes some weird choices sometimes and it is way too eager to code so it spits out a ton of code you need to review. It would be easy to get it to generate a bunch of spaghetti code that works mostly that’s not maintainable by a person out of the box.

I ask AI shitbots technical questions and get wrong answers daily. I said this in another comment, but I regularly have to ask it if what it gave me was actually real.

Like, asking copilot about Powershell commands and modules that are by no means obscure will cause it to hallucinate flags that don’t exist based on the prompt. I give it plenty of context on what I’m using and trying to do, and it makes up shit based on what it thinks I want to hear.

This, but for Wikipedia.

Edit: Ironically, the down votes are really driving home the point in the OP. When you aren’t an expert in a subject, you’re incapable of recognizing the flaws in someone’s discussion, whether it’s an LLM or Wikipedia. Just like the GPT bros defending the LLM’s inaccuracies because they lack the knowledge to recognize them, we’ve got Wiki bros defending Wikipedia’s inaccuracies because they lack the knowledge to recognize them. At the end of the day, neither one is a reliable source for information.

The obvious difference being that Wikipedia has contributors cite their sources, and can be corrected in ways that LLMs are flat out incapable of doing

Really curious about anything Wikipedia has wrong though. I can start with something an LLM gets wrong constantly if you like

Do not bring Wikipedia into this argument.

Wikipedia is the library of Alexandria and the amount of effort people put into keeping Wikipedia pages as accurate as possible should make every LLM supporter be ashamed with how inaccurate their models are if they use Wikipedia as training data

TBF, as soon as you move out of the English language the oversight of a million pairs of eyes gets patchy fast. I have seen credible reports about Wikipedia pages in languages spoken by, say, fewer than 10 million people, where certain elements can easily control the narrative.

But hey, some people always criticize wikipedia as if there was some actually 100% objective alternative out there, and that I disagree with.

Fair point.

I don’t browse Wikipedia much in languages other than English (mainly because those pages are the most up-to-date) but I can imagine there are some pages that straight up need to be in other languages. And given the smaller number of people reviewing edits in those languages, it can be manipulated to say what they want it to say.

I do agree on the last point as well. The fact that literally anyone can edit Wikipedia takes a small portion of the bias element out of the equation, but it is very difficult to not have some form of bias in any reporting. I more use Wikipedia as a knowledge source on scientific aspects which are less likely to have bias in their reporting

Idk it says Elon Musk is a co-founder of OpenAI on Wikipedia. I haven’t found any evidence to suggest he had anything to do with it. Not very accurate reporting.

The company counts Elon Musk among its cofounders, though he has since cut ties and become a vocal critic of it (while launching his own competitor).

With all due respect, Wikipedia’s accuracy is incredibly variable. Some articles might be better than others, but a huge number of them (large enough to shatter confidence in the platform as a whole) contain factual errors and undisguised editorial biases.

It is likely that articles on past social events or individuals will have some bias, as is the case with most articles on those matters.

But, almost all articles on aspects of science are thoroughly peer reviewed and cited with sources. This alone makes Wikipedia invaluable as a source of knowledge.

What topics are you an expert on and can you provide some links to Wikipedia pages about them that are wrong?

I’m a doctor of classical philology and most of the articles on ancient languages, texts, history contain errors. I haven’t made a list of those articles because the lesson I took from the experience was simply never to use Wikipedia.

The fun part about Wikipedia is you can take your expertise and help correct the information, that’s the entire point of the site

Can you at least link one article and tell us what is wrong about it?

How do you get a fucking PhD but you can’t be bothered to post a single source for your unlikely claims? That person is full of shit.

If this were true, which I have my doubts, at least Wikipedia tries and has a specific goal of doing better. AI companies largely don’t give a hot fuck as long as it works good enough to vacuum up investments or profits

Your doubts are irrelevant. Just spend some time fact checking random articles and you will quickly verify for yourself how many inaccuracies are allowed to remain uncorrected for years.

Small inaccuracies are different to just being completely wrong though

There’s an easy way to settle this debate. Link me a Wikipedia article that’s objectively wrong.

I will wait.

why don’t you then go and fix these, quoting high quality sources? are there none?

Because some don’t let you. I can’t find anything to edit Elon Musk or even suggest an edit. It says he is a co-founder of OpenAI. I can’t find any evidence to suggest he has any involvement. Wikipedia says co-founder tho.

https://openai.com/index/introducing-openai/

https://www.theverge.com/2018/2/21/17036214/elon-musk-openai-ai-safety-leaves-board

Tech billionaire Elon Musk is leaving the board of OpenAI, the nonprofit research group he co-founded with Y Combinator president Sam Altman to study the ethics and safety of artificial intelligence.

The move was announced in a short blog post, explaining that Musk is leaving in order to avoid a conflict of interest between OpenAI’s work and the machine learning research done by Tesla to develop autonomous driving.

He’s not involved anymore, but he used to be. It’s not inaccurate to say he was a co-founder.

Interesting! Cheers! I didn’t go farther than openai wiki tbh. It didn’t list him there so I figured it was inaccurate. It turns out it is me who is inaccurate!

There are plenty of high quality sources, but I don’t work for free. If you want me to produce an encyclopedia using my professional expertise, I’m happy to do it, but it’s a massive undertaking that I expect to be compensated for.

Many FOSS projects don’t have money to pay people

This, but for all media.